Our client is a social dating app with worldwide recognition and presence in over 100 countries.

The application is highly successful, with millions of subscribers and over a billion swipes per day. The client’s engineering team was, and still is, challenged to release regular software updates across the Android, iOS, and Web platforms on a bi-weekly basis while maintaining the high quality standards its regular users expect.

The rate of change per release was increasing drastically as the product team pushed new ideas through A/B tests, so manual functional testing alone could not keep up with bi-weekly releases.

In 2016, PFLB was asked to join forces with the in-house engineering team to build what was, at the time, a native test automation solution using tools for automated UI testing: the newly introduced XCUITest library for iOS and the Espresso library for Android.


As a Result:

Success seemed to have been achieved until our team started running into CI battles with the developers.

Let’s examine a typical CI architecture:

CI with pre-merge tests (classical case):

- GitHub repository with app codebase

- Jenkins CI

- Each PR, and every subsequent commit to it, triggers a chain of checks against the PR branch, including but not limited to code compilation, unit tests, and code style validation

- The checks described above block the PR merge: if any of them fails, the PR won’t be merged into the main development branch until the issue is addressed.
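The blocking behaviour described in the list above can be sketched in a few lines. This is only an illustrative model, not the client's Jenkins configuration: the `Check` type, the `can_merge` function, and the hard-coded check outcomes are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Check:
    name: str                # e.g. "compile", "unit-tests", "lint"
    run: Callable[[], bool]  # returns True when the check passes


def can_merge(checks: List[Check]) -> bool:
    """Run every blocking check; the PR is mergeable only if all of them pass."""
    failed = [check.name for check in checks if not check.run()]
    for name in failed:
        print(f"blocking check failed: {name}")
    return not failed


# Hypothetical checks with hard-coded outcomes, for illustration only.
checks = [
    Check("compile", lambda: True),
    Check("unit-tests", lambda: True),
    Check("lint", lambda: False),
]
mergeable = can_merge(checks)
```

In this model a single failing check, whatever its cause, is enough to block the merge, which is why adding a less stable category of checks to the same gate is a sensitive change.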

Challenge:

Add automated UI tests to the Android and iOS repositories so that they run on each pull request along with the other checks: compilation, unit tests, and lint.

Problems:

- UI tests are, by their nature, prone to flakiness. Flakiness can depend on many factors, such as the USB connections to the devices or the internet connection

- Constant UI changes in the app lead to UI test failures and require constant updates to the test code

- Since we use native testing frameworks (Espresso, XCTest) to write fast and reliable iOS and Android UI tests, the tests reside in the same repository as the app’s code. Therefore, when a developer opens a PR and breaks one or more of these tests, changing or excluding the broken test requires another commit or another PR. As a result, all checks need to run once again, which is time-consuming. The developer is not only blocked but also irritated, since they may simply have changed a UI flow and the UI test reacted to that change (a false positive). An unhappy and irritated developer will understandably oppose running UI tests pre-merge and will push to move them to post-merge execution.

Test Orchestrator

Solution:

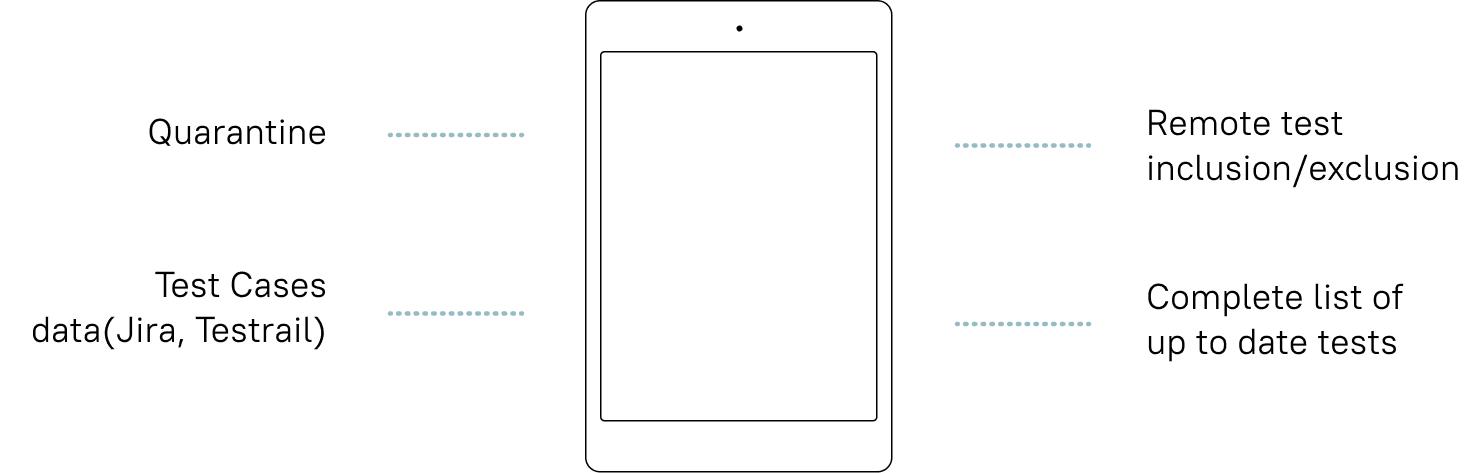

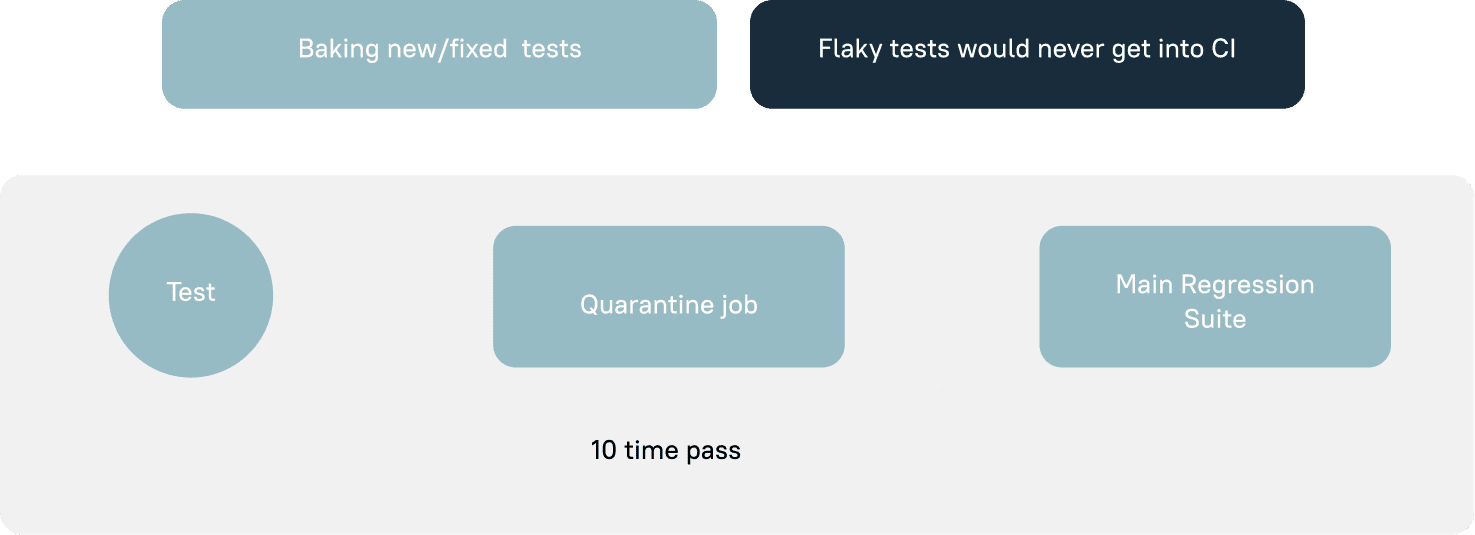

Test Orchestrator – a framework for managing tests in CI.

Use Cases:

01

A test fails in CI due to UI changes. The developer is blocked, although they did not break the test explicitly. The automation team or the developer disables the test from Test Orchestrator’s web portal and opens a new Jira task for the automation team to update the test.
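To make this use case concrete, here is a minimal sketch of how a registry of disabled tests could be consulted before a CI run. It is an assumption about how such a tool might work, not the actual Test Orchestrator implementation: `UiTestRecord`, `Registry`, the test names, and the Jira key are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class UiTestRecord:
    name: str
    enabled: bool = True
    jira_key: Optional[str] = None  # ticket tracking the follow-up work


@dataclass
class Registry:
    records: Dict[str, UiTestRecord] = field(default_factory=dict)

    def disable(self, name: str, jira_key: str) -> None:
        """Disable a test; a linked ticket is required so the test is not forgotten."""
        if not jira_key:
            raise ValueError("a Jira key is required to disable a test")
        record = self.records.setdefault(name, UiTestRecord(name))
        record.enabled = False
        record.jira_key = jira_key

    def runnable(self, discovered: List[str]) -> List[str]:
        """Return the discovered tests that are not currently disabled."""
        return [
            name for name in discovered
            if name not in self.records or self.records[name].enabled
        ]


# Hypothetical test names and ticket key, for illustration only.
registry = Registry()
registry.disable("LoginUiTest.testSwipe", jira_key="QA-123")
to_run = registry.runnable(["LoginUiTest.testSwipe", "ProfileUiTest.testEdit"])
```

Because the disabled state lives outside the repository in this sketch, excluding a test needs no extra commit, so the remaining checks on the PR do not have to be rerun.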

Quarantine

02

A test fails due to an actual bug that the developer introduced in a pull request. After examining the new bug, the product team decides to fix it in the following sprint. The developer or QA engineer then performs the following actions:

- The developer or QA engineer disables the test from the Test Orchestrator web portal and links the Jira bug for reference.

- When the defect is fixed, the status of the test is updated and the test moves to the quarantine job for validation.
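The lifecycle in this use case can be read as a small state machine. The sketch below is hypothetical: the state and event names are invented for illustration, and the two transitions out of quarantine (back to the blocking job on success, back to disabled on failure) are assumptions that the description above does not spell out.

```python
from enum import Enum


class Status(Enum):
    ACTIVE = "active"          # runs in the blocking pre-merge job
    DISABLED = "disabled"      # skipped; linked to a Jira issue
    QUARANTINE = "quarantine"  # runs in a separate validation job


# Allowed transitions. The two transitions out of QUARANTINE are assumptions.
TRANSITIONS = {
    (Status.ACTIVE, "disable"): Status.DISABLED,
    (Status.DISABLED, "defect_fixed"): Status.QUARANTINE,
    (Status.QUARANTINE, "validation_passed"): Status.ACTIVE,
    (Status.QUARANTINE, "validation_failed"): Status.DISABLED,
}


def next_status(current: Status, event: str) -> Status:
    """Return the status that follows `event`, or raise if it is not allowed."""
    try:
        return TRANSITIONS[(current, event)]
    except KeyError:
        raise ValueError(
            f"event {event!r} is not allowed in state {current.name}"
        ) from None


# Walk one test through the full cycle described in this use case.
status = Status.ACTIVE
for event in ("disable", "defect_fixed", "validation_passed"):
    status = next_status(status, event)
```

Keeping the transitions in one table makes it easy to see that a disabled test cannot return to the blocking job without first passing through quarantine.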

Conclusion

Looking back at all the work performed on the project, we can definitely call it a success. Despite dealing with a constantly changing app and working to very tight deadlines, we managed not only to write and run multiple test cases successfully, but also to recognize the need for changes in the process and to come up with a solution that eliminates many of the issues associated with test automation.

All this helped our team recognize the importance of detailed planning, especially at the early stages of a project. In addition, working on a multinational team across different time zones, and especially around tight deadlines, gave us all an opportunity to apply Agile methodology and focus on the quality of the end product for our client. In doing all that, we not only helped deliver a better product and improve a few metrics; our team also learned and grew.