Software teams often face a challenge when certain parts of a system aren’t ready, are unstable, or are too costly to call during testing. That’s what mock testing is for. By simulating dependencies, engineers can verify functionality without relying on real services. Put simply, the meaning of a mock test comes down to creating safe, controllable environments for validating behavior. This article explains what a mock test is, how it works, and why it matters. You’ll also see practical examples, the main benefits, and how mocking fits into broader QA practices.

Key Takeaways

- Mock testing replaces real dependencies with simulated ones to create controlled test environments.

- Mocks are practical wherever dependencies are unavailable, unstable, or costly to call.

- Mocks accelerate development and speed up debugging.

- Mocks make performance testing more efficient and its conditions more predictable.

- Mock testing works best as part of the whole QA process, not as a replacement for it.

What is Mock Testing?

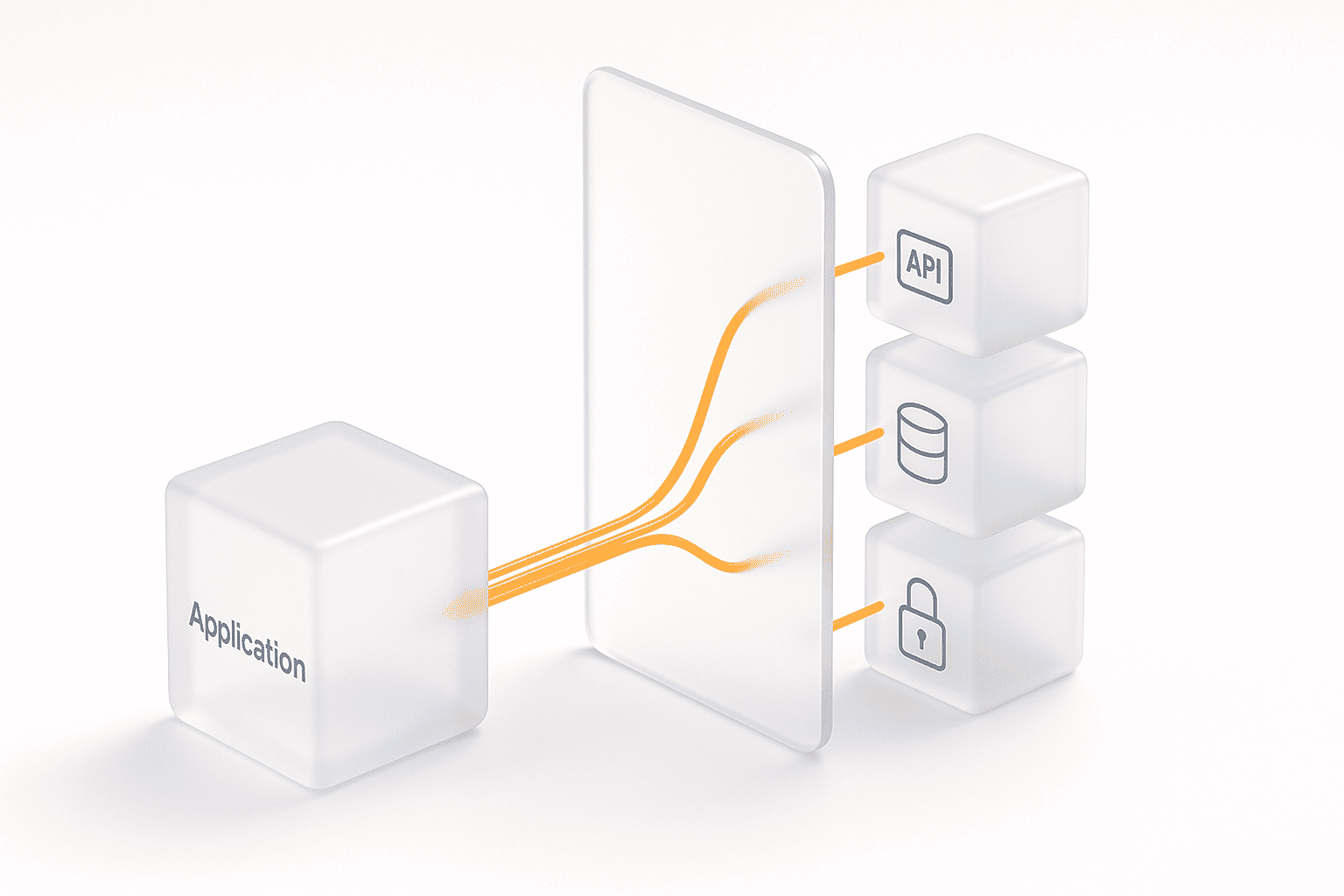

Mock testing is a software testing approach where real components, such as APIs, databases, or external services, are replaced with simulated versions called mocks. These mocks mimic the behavior of actual dependencies, allowing developers and QA teams to test applications in isolation.

The mock testing approach is especially valuable when external systems are unavailable, expensive to access, or too complex to configure during early development stages. Instead of waiting for every service to be fully functional, teams can simulate expected inputs and outputs.

In simple terms, it’s the practice of creating stand-ins for real systems so tests can run in a controlled, predictable way. This improves test reliability, shortens feedback cycles, and helps catch problems earlier.
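To make the idea concrete, here is a minimal Python sketch that uses no mocking library at all. The `ExchangeRateClient` interface, `convert()` function, and `FixedRateClient` stand-in are hypothetical names for illustration: the code under test receives its dependency as an argument, so a test can hand it a stand-in (strictly, a fake that also records calls) with fixed, predictable answers instead of a live rate service.

```python
from __future__ import annotations

from typing import Protocol


class ExchangeRateClient(Protocol):
    """Hypothetical interface for a real exchange-rate service."""

    def rate(self, base: str, quote: str) -> float: ...


def convert(amount: float, base: str, quote: str, client: ExchangeRateClient) -> float:
    """Code under test: converts money using whichever client it is given."""
    return round(amount * client.rate(base, quote), 2)


class FixedRateClient:
    """Stand-in for the real service: no network, same answer every time."""

    def __init__(self, rates: dict[tuple[str, str], float]) -> None:
        self.rates = rates
        self.calls: list[tuple[str, str]] = []  # recorded so tests can inspect usage

    def rate(self, base: str, quote: str) -> float:
        self.calls.append((base, quote))
        return self.rates[(base, quote)]


fake = FixedRateClient({("USD", "EUR"): 0.5})
print(convert(10.0, "USD", "EUR", fake))  # 5.0
print(fake.calls)  # [('USD', 'EUR')]
```

Because `convert()` never knows which client it received, the same function can run against the real service in production and against the stand-in in tests.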

While mocks don’t replace real-world validation, they complement it by providing stable test environments, and they play a useful role in performance testing as well.


How Does Mock Testing Work?

Mock testing follows a structured process to replace real dependencies with controlled simulations. Here’s a step-by-step breakdown of how it typically works in engineering practice:

- Identify Dependencies

  The first step is recognizing which components are either unavailable, unreliable, or too expensive to call during testing. These can include third-party APIs, payment gateways, databases, or legacy services. Engineers decide what needs to be mocked by evaluating dependency stability, response variability, and cost of access.

- Select Mocking Method

  Once the target is clear, the right mocking strategy is chosen. For example, API-based mocks are common in distributed systems, while database mocks are preferred in data-heavy apps. The choice depends on language support, framework tooling, and whether the test requires static stubs or dynamic responses.

- Configure Mock Behavior

  This step involves defining request/response pairs, error codes, and edge cases. Engineers may configure latency injection to test timeout handling or return malformed payloads to validate error resilience. Tools often provide DSLs (domain-specific languages) or configuration files to describe how mocks should behave under specific conditions.

- Integrate into Test Suite

  The mocks are then wired into the unit, integration, or performance testing pipeline. Integration typically involves updating environment variables, redirecting service calls, or injecting mock objects via dependency injection. This ensures that test runs consistently target the mocked versions instead of live services.

- Execute Tests in Isolation

  With mocks in place, the application runs against a controlled test environment. Engineers validate expected outputs, confirm error handling, and measure performance without interference from external systems. Because mocks are deterministic, test outcomes remain repeatable, which is critical for CI/CD pipelines.

- Analyze and Iterate

  After execution, test results are compared against baselines. Engineers look for regressions, unexpected behavior, or missing cases. If gaps are found, mocks are adjusted by adding new responses, refining error cases, or simulating new performance scenarios. This iterative process ensures the mock test setup evolves with the system.
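The steps above can be sketched with Python’s built-in `unittest.mock`. The sketch assumes a hypothetical `PaymentGateway` dependency with a `charge()` method and a hypothetical `checkout()` function under test. It configures a success response and a timeout, injects the mock as an argument, and checks that the error path is handled.

```python
from unittest.mock import Mock


class PaymentGateway:
    """Hypothetical third-party dependency (step 1: identified as costly/unreliable)."""

    def charge(self, amount_cents: int) -> dict:
        raise RuntimeError("would call a real payment API")


def checkout(gateway: PaymentGateway, amount_cents: int) -> str:
    """Code under test: turns gateway outcomes into an order status."""
    try:
        result = gateway.charge(amount_cents)
    except TimeoutError:
        return "retry-later"
    return "paid" if result.get("status") == "approved" else "declined"


# Step 2: choose the method; here, an in-process mock restricted to the real class's interface
gateway = Mock(spec=PaymentGateway)

# Step 3: configure behavior, starting with the happy path
gateway.charge.return_value = {"status": "approved"}

# Steps 4-5: inject the mock and execute in isolation
assert checkout(gateway, 1999) == "paid"
gateway.charge.assert_called_once_with(1999)

# Step 3 again: an edge case where the gateway times out
gateway.charge.side_effect = TimeoutError
assert checkout(gateway, 1999) == "retry-later"

# Step 6: analyze; the mock records every call for inspection
print(gateway.charge.call_count)  # 2
```

Using `spec=PaymentGateway` means a typo such as `gateway.chrage` fails loudly instead of silently returning another mock.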

Examples:

1) Java (JUnit + Mockito): mock an external API client

```java
// Gradle: testImplementation("org.mockito:mockito-core:5.+")
//         testImplementation("org.junit.jupiter:junit-jupiter:5.+")
import static org.junit.jupiter.api.Assertions.assertEquals;
import static org.mockito.Mockito.*;

import org.junit.jupiter.api.Test;

// Production classes (normally in their own source files)
class WeatherClient {
    String fetch(String city) { /* calls real HTTP */ return ""; }
}

class WeatherService {
    private final WeatherClient client;

    // The client is injected, so a test can hand in a mock instead
    WeatherService(WeatherClient client) { this.client = client; }

    String todaysForecast(String city) {
        String json = client.fetch(city);
        // parse json… simplified:
        return json.contains("rain") ? "Bring umbrella" : "All clear";
    }
}

class WeatherServiceTest {
    @Test
    void returnsUmbrellaAdviceWhenRaining() {
        WeatherClient client = mock(WeatherClient.class);
        when(client.fetch("London")).thenReturn("{\"cond\":\"rain\"}");

        WeatherService svc = new WeatherService(client);

        assertEquals("Bring umbrella", svc.todaysForecast("London"));
        verify(client).fetch("London"); // the service really called its dependency
    }
}
```

Why this works

We replace the network dependency with a mock that returns a deterministic payload, so the test exercises only the service’s decision logic.

2) Java (WireMock): mock HTTP API in integration tests

```java
// testImplementation("com.github.tomakehurst:wiremock-jre8:2.+")
import static com.github.tomakehurst.wiremock.client.WireMock.*;

import com.github.tomakehurst.wiremock.WireMockServer;
import org.junit.jupiter.api.Test;

class UserApiIntegrationTest {
    @Test
    void returnsStubbedUser() {
        WireMockServer wire = new WireMockServer(8089);
        wire.start();
        try {
            configureFor("localhost", 8089);
            stubFor(get(urlEqualTo("/v1/users/42"))
                .willReturn(aResponse()
                    .withStatus(200)
                    .withHeader("Content-Type", "application/json")
                    .withFixedDelay(150) // latency injection: respond after 150 ms
                    .withBody("{\"id\":42,\"name\":\"Ada\"}")));

            // Your app under test calls http://localhost:8089/v1/users/42; assert on its behavior here
        } finally {
            wire.stop(); // always release the port, even if the test fails
        }
    }
}
```

Good for

Integration tests, fault injection (latency, 500s), and CI reproducibility.
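In a Python codebase, one common way to make the app under test call a local mock server like this is to read the service’s base URL from configuration instead of hard-coding it. In this sketch, `USER_API_BASE_URL` and `user_url()` are hypothetical names; `unittest.mock.patch.dict` temporarily sets the variable and restores the environment when the block exits.

```python
import os
from unittest.mock import patch

DEFAULT_BASE_URL = "https://api.example.com"  # real service, used when nothing overrides it


def user_url(user_id: int) -> str:
    """Build the URL the app calls; the base URL comes from configuration."""
    base = os.environ.get("USER_API_BASE_URL", DEFAULT_BASE_URL)  # hypothetical variable
    return f"{base.rstrip('/')}/v1/users/{user_id}"


# In a test, point the app at the local WireMock server for the duration of the block
with patch.dict(os.environ, {"USER_API_BASE_URL": "http://localhost:8089"}):
    print(user_url(42))  # http://localhost:8089/v1/users/42
```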

3) Python (pytest + unittest.mock): mock an HTTP call

```python
# pip install pytest requests
from unittest.mock import Mock, patch

import requests


def get_user(uid: int) -> dict:
    r = requests.get(f"https://api.example.com/users/{uid}")
    r.raise_for_status()
    return r.json()


def test_get_user_happy_path():
    with patch("requests.get") as fake_get:
        # Canned response object; raise_for_status() is a harmless Mock call by default
        fake_resp = Mock(status_code=200)
        fake_resp.json.return_value = {"id": 1, "name": "Ada"}
        fake_get.return_value = fake_resp

        user = get_user(1)

    assert user["name"] == "Ada"
    fake_get.assert_called_once_with("https://api.example.com/users/1")
```

Tip

Patch the name where your code looks it up. Because `get_user` calls `requests.get` through the `requests` module, patching `"requests.get"` works here; if your module did `from requests import get`, you would patch `"<your_module>.get"` instead.
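The tip above is easiest to see side by side. This self-contained sketch builds a throwaway in-memory module named `report` (hypothetical) whose source does `from math import sqrt`. Patching `math.sqrt` then has no effect on it, while patching `report.sqrt`, the name the module actually looks up, does.

```python
import sys
import types
from unittest.mock import patch

# Build a tiny module in memory, as if report.py contained:
#     from math import sqrt
#     def side(area): return sqrt(area)
report = types.ModuleType("report")
exec("from math import sqrt\ndef side(area):\n    return sqrt(area)\n", report.__dict__)
sys.modules["report"] = report  # make it importable by name so patch() can find it

# Patching the original location misses the reference report already holds
with patch("math.sqrt", return_value=-1.0):
    print(report.side(16.0))  # 4.0 (the real sqrt still runs)

# Patching where the code looks the name up works
with patch("report.sqrt", return_value=-1.0):
    print(report.side(16.0))  # -1.0 (the mock is used)
```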

4) Python (responses): simulate requests without patching

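```python
# pip install pytest requests responses
import requests
import responses


@responses.activate
def test_profiles_endpoint():
    # Register a canned reply; no real network call is made
    responses.add(
        responses.GET,
        "https://api.example.com/profiles/7",
        json={"id": 7, "vip": True},
        status=200,
    )

    r = requests.get("https://api.example.com/profiles/7")

    assert r.json()["vip"] is True
```

Why use it

Simple HTTP mocks with no manual patching. While active, `responses` raises a connection error for any request you did not register, so unexpected calls surface immediately.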

5) JavaScript (Jest): mock fetch in UI/service code

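```javascript
// npm i --save-dev jest

// Code under test (normally imported from your service module)
async function getProduct(id) {
  const res = await fetch(`/api/products/${id}`);
  return res.json();
}

beforeEach(() => {
  global.fetch = jest.fn(); // fresh mock for every test; no real network access
});

test("fetches product by id", async () => {
  fetch.mockResolvedValueOnce({
    ok: true,
    json: async () => ({ id: 99, title: "Keyboard" }),
  });

  const data = await getProduct(99);

  expect(fetch).toHaveBeenCalledWith("/api/products/99");
  expect(data.title).toBe("Keyboard");
});
```

Note

For browser-like behavior, consider the `whatwg-fetch` polyfill or a DOM test environment such as jsdom.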

6) JavaScript (MSW – Mock Service Worker): mock APIs at runtime

```javascript
// npm i msw@1 --save-dev
// (rest/ctx handlers are the MSW 1.x API; MSW 2.x replaced them with http/HttpResponse)
import { setupServer } from "msw/node";
import { rest } from "msw";

const server = setupServer(
  rest.get("https://api.example.com/orders/:id", (req, res, ctx) => {
    return res(ctx.status(200), ctx.json({ id: req.params.id, total: 42 }));
  })
);

// Test-runner hooks: start intercepting, drop per-test overrides, then shut down
beforeAll(() => server.listen());
afterEach(() => server.resetHandlers());
afterAll(() => server.close());
```

Why MSW

Intercepts requests at the network layer; works for both tests and local dev.

7) Database mocking options

7.1 Java + H2 (in-memory) for repositories

```java
// testImplementation("com.h2database:h2:2.+")
import org.springframework.boot.test.autoconfigure.jdbc.AutoConfigureTestDatabase;
import org.springframework.boot.test.autoconfigure.orm.jpa.DataJpaTest;

@DataJpaTest
@AutoConfigureTestDatabase(replace = AutoConfigureTestDatabase.Replace.ANY) // uses H2
class UserRepositoryTest {
    // @Autowired UserRepository repo;
    // write tests that persist/lookup without touching real DB
}
```

Why H2

Fast, schema-controlled tests without a staging DB.

7.2 Python + SQLite (in-memory) with SQLAlchemy

```python
# pip install sqlalchemy
from sqlalchemy import create_engine, text

# For ":memory:", SQLAlchemy reuses a single connection per thread,
# so the table created below is still there for the second block.
engine = create_engine("sqlite+pysqlite:///:memory:", echo=False)

with engine.begin() as conn:  # begin() commits when the block exits
    conn.execute(text("CREATE TABLE users(id INTEGER, name TEXT)"))
    conn.execute(text("INSERT INTO users VALUES(1,'Ada')"))

with engine.connect() as conn:
    row = conn.execute(text("SELECT name FROM users WHERE id=1")).fetchone()
    assert row[0] == "Ada"
```

Why SQLite

Quick, portable, no external service.
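The same idea works with only the standard library’s `sqlite3` module when the code under test receives its connection from outside. In this sketch, `UserRepository` and its schema are hypothetical: production code would pass a connection to the real database, while the test passes an in-memory one that disappears when it is closed.

```python
import sqlite3
from typing import Optional


class UserRepository:
    """Hypothetical repository: depends only on the connection it is given."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn

    def add(self, user_id: int, name: str) -> None:
        with self.conn:  # commits on success, rolls back on error
            self.conn.execute("INSERT INTO users (id, name) VALUES (?, ?)", (user_id, name))

    def name_of(self, user_id: int) -> Optional[str]:
        row = self.conn.execute("SELECT name FROM users WHERE id = ?", (user_id,)).fetchone()
        return row[0] if row else None


def make_test_connection() -> sqlite3.Connection:
    """Fresh in-memory database with a controlled schema; nothing persists after close()."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
    return conn


conn = make_test_connection()
repo = UserRepository(conn)
repo.add(1, "Ada")
print(repo.name_of(1))  # Ada
print(repo.name_of(2))  # None
conn.close()
```

Creating a new connection per test keeps tests independent: no test can see rows left behind by another.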

8) Proxy-based mocking (Nginx reverse-proxy + fault injection)

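nginx.conf (test-only):

```nginx
events {}

http {
    # Placeholder upstream; replace with the real service address
    upstream real-upstream {
        server 127.0.0.1:9000;
    }

    server {
        listen 8080;

        location / {
            proxy_pass http://real-upstream;
            # Tag proxied requests so logs and the upstream can tell test traffic apart
            proxy_set_header X-Test "proxy-mock";

            # Simple fault: uncomment to answer every request with 500 without reaching the upstream
            # return 500;

            # Core nginx has no delay directive; injecting latency (e.g., 200 ms) needs an
            # extra module such as OpenResty/Lua, or a deliberately slow upstream.
        }
    }
}
```

Use case

Intercept and shape traffic without changing app code (only the address the app calls).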

9) Classloader/bytecode remapping (PowerMock) — legacy edge cases

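```java
// testImplementation("junit:junit:4.13.2")
// testImplementation("org.powermock:powermock-module-junit4:2.+")
// testImplementation("org.powermock:powermock-api-mockito2:2.+")
import static org.junit.Assert.assertEquals;

import org.junit.Test;
import org.junit.runner.RunWith;
import org.mockito.Mockito;
import org.powermock.api.mockito.PowerMockito;
import org.powermock.core.classloader.annotations.PrepareForTest;
import org.powermock.modules.junit4.PowerMockRunner;

// Illustrative legacy class with a static method
class StaticUtil {
    static String dangerousCall() { throw new IllegalStateException("touches production"); }
}

@RunWith(PowerMockRunner.class)
@PrepareForTest(StaticUtil.class) // PowerMock rewrites this class's bytecode so statics can be stubbed
public class StaticUtilTest {
    @Test
    public void mocksStaticMethod() {
        PowerMockito.mockStatic(StaticUtil.class);
        Mockito.when(StaticUtil.dangerousCall()).thenReturn("safe");

        assertEquals("safe", StaticUtil.dangerousCall());
    }
}
```

When to use

Static/final/legacy code that resists dependency injection. The PowerMock runner shown here is JUnit 4-based; on Mockito 3.4+ with the inline mock maker (the default since Mockito 5), `Mockito.mockStatic` covers many static cases without PowerMock.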

10) API mocks for performance testing (WireMock + JMeter/Locust)

10.1 WireMock mappings for load-safe endpoints

mappings/get-user.json

```json
{
  "request": { "method": "GET", "urlPath": "/v1/users/42" },
  "response": {
    "status": 200,
    "headers": { "Content-Type": "application/json" },
    "fixedDelayMilliseconds": 40,
    "jsonBody": { "id": 42, "tier": "gold" }
  }
}
```

Run WireMock standalone:

```sh
java -jar wiremock-standalone.jar --port 9090 --root-dir .
```

Point your JMeter HTTP Requests to http://localhost:9090/v1/users/42 to measure client behavior without hitting real upstreams (great when real APIs are rate-limited or costly).

10.2 Locust hitting a mock API

```python
# pip install locust
from locust import HttpUser, between, task


class UserLoad(HttpUser):
    host = "http://localhost:9090"  # the WireMock stub, not the real upstream
    wait_time = between(0.1, 0.3)  # think time per simulated user, in seconds

    @task
    def get_user(self):
        self.client.get("/v1/users/42")
```

Why this helps

You control the mock’s latency deterministically and can focus on your system’s client logic, not third-party variability. This is where mocks fit into performance testing and broader performance testing services workflows.
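If you want the same kind of deterministic stub without WireMock, Python’s standard library is enough for a rough sketch. The hypothetical `StubHandler` below serves a canned JSON body for `/v1/users/42` after a fixed delay (40 ms, mirroring the mapping above); the OS picks a free port, and the small timing loop is illustrative only, not a substitute for JMeter or Locust.

```python
import json
import threading
import time
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

FIXED_DELAY_S = 0.040  # simulated upstream latency, set deterministically
CANNED = {"/v1/users/42": {"id": 42, "tier": "gold"}}


class StubHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        body = CANNED.get(self.path)
        time.sleep(FIXED_DELAY_S)  # latency injection
        payload = json.dumps(body if body is not None else {"error": "not mocked"}).encode()
        self.send_response(200 if body is not None else 404)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, *args) -> None:  # keep test output quiet
        pass


server = ThreadingHTTPServer(("127.0.0.1", 0), StubHandler)  # port 0: OS picks a free port
threading.Thread(target=server.serve_forever, daemon=True).start()
base = f"http://127.0.0.1:{server.server_address[1]}"
opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))  # bypass system proxies

latencies = []
for _ in range(5):
    start = time.perf_counter()
    with opener.open(f"{base}/v1/users/42") as resp:
        data = json.load(resp)
    latencies.append(time.perf_counter() - start)

print(data["tier"])  # gold
print(f"min latency: {min(latencies) * 1000:.0f} ms")  # never below the injected delay
server.shutdown()
server.server_close()
```

Because the delay lives in the stub, any extra latency you measure beyond it comes from the client side, which is exactly what a mock-backed load test is meant to isolate.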

11) Fault injection patterns to test resilience

WireMock:

```java
stubFor(get(urlEqualTo("/v1/flaky"))
    .willReturn(aResponse()
        .withStatus(503)
        .withHeader("Retry-After", "1")
        .withBody("{\"error\":\"temporary\"}")));
```

MSW:

```javascript
// MSW 1.x handler syntax; register it per test with server.use(...)
rest.get("/v1/flaky", (req, res, ctx) =>
  res(ctx.status(503), ctx.json({ error: "temporary" }))
);
```

Use it to

Validate retries, backoff, and circuit breakers.
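Here is a minimal Python sketch of what such a test can check, assuming a hypothetical `fetch_with_retry()` helper. A `unittest.mock.Mock` plays the flaky endpoint by raising a hypothetical `TemporaryError` (standing in for a 503) twice before succeeding, and `sleep` is injected so the backoff schedule can be asserted without actually waiting.

```python
from typing import Callable
from unittest.mock import Mock


class TemporaryError(Exception):
    """Hypothetical stand-in for a 503 'temporary' response."""


def fetch_with_retry(call: Callable[[], dict], sleep: Callable[[float], None],
                     attempts: int = 3, base_delay: float = 0.5) -> dict:
    """Retry `call` on TemporaryError, doubling the wait each time (0.5 s, 1 s, ...)."""
    for attempt in range(attempts):
        try:
            return call()
        except TemporaryError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            sleep(base_delay * 2 ** attempt)
    raise ValueError("attempts must be at least 1")  # only reached if the loop never ran


# Flaky dependency: two failures, then success (side_effect items are used in order)
flaky = Mock(side_effect=[TemporaryError(), TemporaryError(), {"ok": True}])
fake_sleep = Mock()  # records the requested delays instead of actually waiting

print(fetch_with_retry(flaky, fake_sleep))  # {'ok': True}
print(flaky.call_count)  # 3
print([c.args[0] for c in fake_sleep.call_args_list])  # [0.5, 1.0]
```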

Key Benefits of Mock Testing

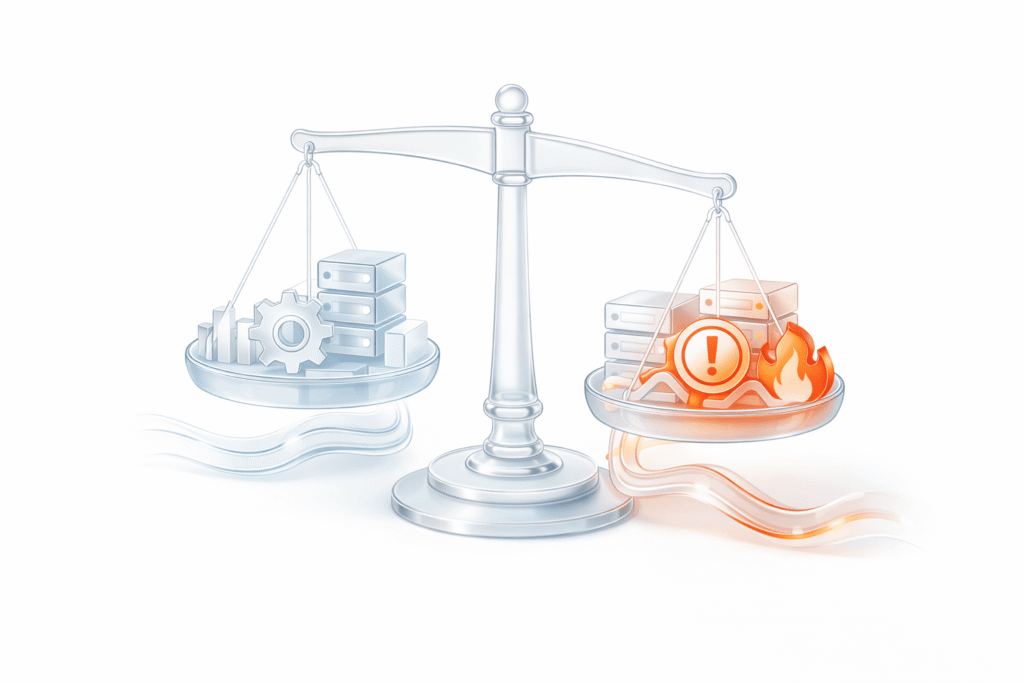

- Isolation of Components

  Mock testing isolates the system under test from unreliable or unfinished dependencies. Failures therefore point to the code being validated rather than to hidden issues in third-party APIs or staging databases. Engineers get cleaner signals and faster debugging cycles.

- Faster Development and Testing

  By simulating external services, teams avoid long setup times, unstable environments, and waiting for backend readiness. This shortens cycle time and allows parallel development across frontend, backend, and QA. In CI/CD, mock-based suites run quickly and provide rapid feedback.

- Efficiency in Performance Scenarios

  Using mocks during load and stress testing keeps upstream systems from becoming the bottleneck. Engineers can model latency, errors, and throughput in a controlled way, which is essential for capacity planning. This ties directly to enterprise performance testing services, where reliable baselines depend on stable, predictable test conditions.

- Lower Costs

  Real external services, especially APIs with usage fees, can make repeated testing expensive. With mocks, engineers simulate the same endpoints without per-call charges, reducing infrastructure and subscription costs while maintaining test quality.

- Improved Scalability

  Large engineering teams can run hundreds of test cases in parallel without overloading shared environments. Mocks scale horizontally: new instances are easy to spin up, which keeps testing consistent across microservices and distributed systems.

Final Thought

Mock testing is powerful, but it isn’t a silver bullet. On its own, it solves isolation problems and accelerates feedback, yet the real value comes when it’s integrated into the full QA and delivery pipeline. In practice, mocks should coexist with integration tests, system tests, and live validation to provide confidence at every level.

Treating mock testing as one tool in the engineer’s toolbox, not the entire toolbox, gives you faster feedback while still grounding your product in real-world performance. When mocks are embedded in the process end-to-end and backed by tests against real systems, they improve reliability and reduce risk without leaving the blind spots that mocks alone would create.