Performance reports are packed with truth and noise in equal measure. Percentiles bend under outliers, error spikes hide between throughput plateaus, and a single mislabeled chart can derail a release meeting. AI can help, but the quality of its answers tracks the quality of your questions.

What you’ll find here is a prompt list you can drop into your workflow, useful for AI in performance testing and day-to-day AI-powered load testing alike. It’s a set of precise, reusable prompts that reference the things engineers actually look at: P50/P95/P99, error rates, RPS, warm-up vs steady state, changes between runs, and the exact minute marks where trouble starts. Each prompt asks AI to cite evidence, stay inside a time window, and return a format you can act on: bullets, tables, or ticket stubs.

Who is this for? Performance engineers validating runs and running sanity checks. SREs correlating latency with CPU, GC, or I/O. PMs turning findings into backlog and release notes. Execs who need a four-bullet answer that doesn’t dodge risk. If you’re reading a load test report right now, these prompts will help you make a confident decision.

Two quick usage rules before we dive in. First, add context: name the run, baseline, target RPS, and window you care about. Second, demand receipts: ask for timestamps and chart references so you can verify the claim in seconds. AI accelerates analysis; your judgment seals it.

How to Ask Better Questions of Your Report

AI is only as sharp as the question you ask. Good prompts for AI in performance testing give context, name the metric, constrain time, and demand evidence you can verify on a chart. Use the pattern below and swap in the variables for your run.

The pattern (copy-paste skeleton)

Context: [ENV]; run=[RUN_A], baseline=[BASELINE]; target=[RPS]; window=[WINDOW].

Task: analyze [METRIC] for [ENDPOINT or FLOW]. Check steady state before comparing.

Deliverable: 3 bullets (finding, evidence timestamp, impact) + 1 action with owner.

Guardrails: cite minute marks; note env diffs; say confidence=high/med/low.

When to use: anytime you’re interrogating an AI report (P95 shift, error burst, throughput sag).

Why it works: it fixes scope creep (time window), forces comparability (baseline + env), and produces an output you can hand to someone (bullets + owner).
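If you reuse the skeleton across many reports, filling it from code guarantees that no placeholder is left blank. A minimal sketch using plain Python string formatting; the `build_prompt` helper and its field names are hypothetical, and the sample values are taken from Example A.

```python
# Hypothetical helper: render the copy-paste skeleton from named fields.
SKELETON = (
    "Context: {env}; run={run}, baseline={baseline}; target={rps}; window={window}.\n"
    "Task: analyze {metric} for {endpoint}. Check steady state before comparing.\n"
    "Deliverable: 3 bullets (finding, evidence timestamp, impact) + 1 action with owner.\n"
    "Guardrails: cite minute marks; note env diffs; say confidence=high/med/low."
)


def build_prompt(**fields: str) -> str:
    """Fill every placeholder; str.format raises KeyError if one is missing."""
    return SKELETON.format(**fields)


prompt = build_prompt(
    env="perf-aws-c6i",
    run="2025-08-18-Checkout",
    baseline="2025-08-11-Checkout",
    rps="1500",
    window="T+18–T+38",
    metric="P95 latency",
    endpoint="POST /checkout",
)
print(prompt)
```

Failing loudly on a missing field is deliberate: a prompt without a window or baseline is exactly the kind that invites vague answers.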

Variables you’ll reuse

Sanity checks before you compare anything

Evidence-first outputs

Ask AI to show its work:

Two worked examples

Example A — PE: Investigate a P95 spike in steady state

Context: [ENV=perf-aws-c6i]; run=[RUN_A=2025-08-18-Checkout];

baseline=[BASELINE=2025-08-11-Checkout];

target=[RPS=1500]; window=[WINDOW=T+18–T+38].

Task: analyze [METRIC=P95 latency] for [FLOW=POST /checkout].

Deliverable: 3 bullets (finding with delta%, evidence timestamps, user impact) + 1 action with owner.

Guardrails: confirm steady state; note env diffs; confidence rating.

Output (example — illustrative data)

Action (owner: Payments Team): Inspect downstream payment calls and DB lock/wait metrics for T+22–T+27; add per-minute PSP latency + DB wait panels to the dashboard and rerun a 10-min focused test on POST /checkout with increased trace sampling.

Confidence: Medium-high

Example B — PM: Ship/no-ship summary for a release review

Context: [ENV=staging-r7]; run=[RUN_A=R7-rc3]; baseline=[BASELINE=R7-rc2];

target=[RPS=900]; window=[WINDOW=T+12–T+30]; SLA=[SLA=P95≤800 ms, error_rate≤1%].

Task: give 4 bullets: outcome vs SLA, top risk + timestamp, likely cause sketch, next step + owner.

Guardrails: keep under 70 words; cite minute marks; add confidence.

Output (example — illustrative data)

Outcome: P95 breached (T+18–T+22; peak 920 ms @ T+19); error_rate up to 1.3% — No-Go.

Top risk: Checkout POST /checkout tail at T+18–T+22 under steady state (~900 RPS).

Likely cause: downstream payments service or DB wait; 5xx error cluster near T+19.

Next step: Payments team to inspect logs/traces @ T+18–T+22; rerun focused test. Confidence: Medium.

Prompts for Performance Engineers (diagnostics & verification)

Verify the run, isolate the problem, and make it easy to hand off. These prompts assume you’ve set context (run, baseline, target load, window) and will demand evidence (minute marks, chart references). Copy, paste, and swap the variables.

Latency & percentiles

1) Verify a P95 jump

Use when the AI narrative hints at a latency increase and you need proof points.

Context: [ENV]; run=[RUN_A]; baseline=[BASELINE]; target=[RPS]; window=[WINDOW].

Task: analyze [METRIC=P95 latency] for [ENDPOINT or FLOW]. Confirm steady state before comparison.

Deliverable: 4 bullets (delta %, evidence minute marks, confidence) + 1 action with owner.

Guardrails: cite the exact chart and timestamps; note env differences.
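Before trusting a reported delta, you can recompute it from raw samples. A minimal sketch, assuming you can export per-request latencies (ms) for the steady-state window; it uses the nearest-rank percentile definition, and your load tool may interpolate instead, so expect small differences. The sample values are illustrative.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("no samples in window")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def delta_pct(baseline: float, run: float) -> float:
    """Relative change of run vs baseline, in percent (positive = higher)."""
    return (run - baseline) / baseline * 100


# Illustrative latencies for the steady-state window only (warm-up already excluded).
baseline_ms = [380, 395, 400, 410, 420, 430, 445, 460, 480, 500]
run_ms = [390, 410, 430, 455, 470, 490, 520, 560, 610, 650]

b95, r95 = percentile(baseline_ms, 95), percentile(run_ms, 95)
print(f"P95 baseline={b95} ms, run={r95} ms, delta={delta_pct(b95, r95):+.1f}%")
```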

2) Isolate the tail (P99 contributors)

Use to see which endpoints dominate tail pain.

Context: [ENV]; run=[RUN_A]; window=[WINDOW].

Task: find top contributors to [METRIC=P99 latency].

Deliverable: table endpoint | P99(ms) | calls | share_of_tail(%) | timestamps (first/peak/last); + 1 action.

Guardrails: exclude warm-up; flag endpoints with <1% of traffic.
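To sanity-check an AI’s tail table, you can compute each endpoint’s share of the slowest requests yourself. This sketch assumes `(endpoint, latency_ms)` pairs from the steady-state window and defines “share of tail” as the fraction of requests at or above the overall P99 that came from each endpoint; that definition is an assumption, not a standard metric, so spell it out in the prompt if you rely on it.

```python
import math
from collections import Counter


def tail_contributors(requests, p=99.0, min_traffic_pct=1.0):
    """requests: list of (endpoint, latency_ms) pairs from the steady-state window."""
    latencies = sorted(ms for _, ms in requests)
    threshold = latencies[max(math.ceil(p * len(latencies) / 100), 1) - 1]  # nearest-rank P99
    calls = Counter(ep for ep, _ in requests)
    tail = Counter(ep for ep, ms in requests if ms >= threshold)
    tail_total = sum(tail.values())
    rows = []
    for ep, n in tail.most_common():
        traffic_pct = calls[ep] * 100 / len(requests)
        rows.append({
            "endpoint": ep,
            "calls": calls[ep],
            "share_of_tail_pct": round(n * 100 / tail_total, 1),
            "low_traffic": traffic_pct < min_traffic_pct,  # the "<1% of traffic" flag
        })
    return rows


# Illustrative data: 200 requests, a slow checkout, and one rare admin call.
requests = [("/search", 100)] * 197 + [("/checkout", 900)] * 2 + [("/admin", 950)]
rows = tail_contributors(requests)
for row in rows:
    print(row)
```

The low-traffic flag matters because one slow call on a rarely used endpoint can look dramatic in a tail table while barely affecting users.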

Errors & retries

3) Status-code breakdown by endpoint

Use when error rate rises and you need a clean split by code/path.

Context: [ENV]; run=[RUN_A]; window=[WINDOW].

Task: break down errors by status code and [ENDPOINT].

Deliverable: table status | endpoint | count | error_rate% | first/last minute marks; + 3 bullets (pattern, peak, impact) + 1 action.

Guardrails: separate client vs server errors; surface unknowns.
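A quick way to check the AI’s status-code table is to rebuild it from raw results. A sketch assuming `(endpoint, status)` pairs, where `status` is `None` for requests that never received an HTTP response (for example, connection resets or client timeouts); error_rate% here is errors divided by that endpoint’s total calls, which is one reasonable reading of the prompt.

```python
from collections import Counter


def error_breakdown(requests):
    """requests: list of (endpoint, status) pairs; status None = no HTTP response."""
    calls = Counter(ep for ep, _ in requests)
    errors = Counter((status, ep) for ep, status in requests if status is None or status >= 400)
    rows = []
    for (status, ep), count in errors.most_common():
        kind = "unknown" if status is None else ("client" if status < 500 else "server")
        rows.append({
            "status": status,
            "endpoint": ep,
            "count": count,
            "error_rate_pct": round(count / calls[ep] * 100, 2),
            "class": kind,  # keeps 4xx, 5xx, and unknowns separate, as the guardrail asks
        })
    return rows


results = (
    [("/checkout", 200)] * 8 + [("/checkout", 503)] * 2
    + [("/login", 200)] * 3 + [("/login", 401)]
    + [("/search", None)]
)
rows = error_breakdown(results)
for row in rows:
    print(row)
```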

4) Detect retry storms

Use to catch exponential retry cascades that inflate traffic and hide root causes.

Context: [ENV]; run=[RUN_A]; window=[WINDOW].

Task: detect retry patterns (same endpoint/user-flow retried within X seconds).

Deliverable: table key(flow or sampler) | attempts | window | outcome; + 2 bullets on risk + 1 action.

Guardrails: call out backoff/jitter presence if visible.
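If you have per-request logs, a rough retry detector can confirm or refute what the AI reports. This sketch assumes events of `(timestamp_s, user_id, endpoint, ok)` and treats an attempt as a retry when the same user hits the same endpoint within `window_s` seconds of a failed attempt; both the event shape and the rule are assumptions standing in for the retry window in the prompt.

```python
def retry_chains(events, window_s: float = 5.0):
    """events: (timestamp_s, user_id, endpoint, ok). Returns chains with more than one attempt."""
    open_chains = {}
    chains = []

    def close(key, chain):
        if chain["attempts"] > 1:  # a single attempt is not a retry
            chains.append({
                "key": key,
                "attempts": chain["attempts"],
                "window_s": chain["last_ts"] - chain["start_ts"],
                "outcome": "recovered" if chain["last_ok"] else "still failing",
            })

    for ts, user, endpoint, ok in sorted(events):
        key = (user, endpoint)
        chain = open_chains.get(key)
        if chain and not chain["last_ok"] and ts - chain["last_ts"] <= window_s:
            # Same user, same endpoint, soon after a failure: count it as a retry.
            chain.update(attempts=chain["attempts"] + 1, last_ts=ts, last_ok=ok)
        else:
            if chain:
                close(key, chain)
            open_chains[key] = {"start_ts": ts, "last_ts": ts, "attempts": 1, "last_ok": ok}
    for key, chain in open_chains.items():
        close(key, chain)
    return chains


events = [
    (0.0, "u1", "/pay", False), (1.0, "u1", "/pay", False), (3.0, "u1", "/pay", True),
    (10.0, "u2", "/pay", True), (12.0, "u2", "/pay", True),
    (20.0, "u3", "/pay", False), (30.0, "u3", "/pay", False),
]
result = retry_chains(events)
print(result)
```

Here only `u1` forms a chain: `u2` never failed, and `u3`’s second attempt falls outside the window, so it counts as a fresh request rather than a retry.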

Throughput & concurrency

5) Achieved vs target load

Use to confirm the test actually hit its intended pressure.

Context: [ENV]; run=[RUN_A]; window=[WINDOW]; target=[RPS].

Task: compare achieved RPS to target and mark ramp/steady segments.

Deliverable: table minute | target_RPS | actual_RPS | delta% | note; + 2 bullets (did we reach steady state? where?) + 1 action.

Guardrails: exclude ramp; flag minutes below 95% of target.
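Achieved vs target is plain arithmetic over per-minute throughput, so you can verify the AI’s table directly. A sketch assuming a per-minute list of achieved RPS and a known ramp length in minutes; the constant target is a simplification, since during the ramp your tool’s target may itself be rising.

```python
def load_table(actual_rps: list[float], target_rps: float, ramp_minutes: int,
               min_ratio: float = 0.95):
    """One row per minute: (minute, target_RPS, actual_RPS, delta%, note)."""
    rows = []
    for minute, actual in enumerate(actual_rps):
        delta = (actual - target_rps) * 100 / target_rps
        if minute < ramp_minutes:
            note = "ramp (excluded)"
        elif actual < min_ratio * target_rps:
            note = f"below {min_ratio:.0%} of target"
        else:
            note = "steady"
        rows.append((minute, target_rps, actual, round(delta, 1), note))
    return rows


rows = load_table([300, 900, 1480, 1500, 1400, 1510], target_rps=1500, ramp_minutes=2)
for row in rows:
    print(row)
```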

6) Controller bottlenecks

Use to find where concurrency is getting gated inside the plan.

Context: [ENV]; run=[RUN_A]; window=[WINDOW].

Task: identify controllers/samplers limiting concurrency (queues/waits).

Deliverable: 3 bullets (where, evidence, impact) + 1 action; include chart/timestamp refs.

Guardrails: note think-time/pacing that may explain the cap.

Baseline vs current run

7) Regression check (baseline table)

Use to quantify change against a known-good run.

Context: [ENV]; run=[RUN_A]; baseline=[BASELINE]; window=[WINDOW].

Task: compare P95, error_rate, throughput for key [ENDPOINT]s.

Deliverable: table metric | baseline | run | delta% | worse/better; + 2 bullets + 1 action with owner.

Guardrails: confirm steady state in both runs; call out any script changes.
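The baseline table is simple arithmetic, so it is worth recomputing before you argue about it. A sketch assuming you already have one summary value per metric for each run’s steady-state window; the ±5% “flat” band is a hypothetical noise tolerance, so choose one that matches your run-to-run variance. The key detail is direction: higher latency or error rate is worse, but higher throughput is better.

```python
# Direction matters: lower is better for latency and errors, higher for throughput.
LOWER_IS_BETTER = {"p95_ms": True, "error_rate_pct": True, "throughput_rps": False}


def regression_table(baseline: dict, run: dict, flat_band_pct: float = 5.0):
    """Rows of (metric, baseline, run, delta%, verdict)."""
    rows = []
    for metric, lower_is_better in LOWER_IS_BETTER.items():
        b, r = baseline[metric], run[metric]
        if b == 0:
            delta = 0.0 if r == 0 else float("inf")  # avoid dividing by a zero baseline
        else:
            delta = (r - b) * 100 / b
        if abs(delta) <= flat_band_pct:
            verdict = "flat"
        elif (delta > 0) == lower_is_better:
            verdict = "worse"
        else:
            verdict = "better"
        rows.append((metric, b, r, round(delta, 1), verdict))
    return rows


baseline = {"p95_ms": 420, "error_rate_pct": 0.5, "throughput_rps": 1500}
run = {"p95_ms": 510, "error_rate_pct": 0.2, "throughput_rps": 1350}
rows = regression_table(baseline, run)
for row in rows:
    print(row)
```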

8) Environment parity gate

Use to decide if comparisons are valid before you argue about deltas.

Context: [ENV]; run=[RUN_A]; baseline=[BASELINE].

Task: list environment differences affecting comparability (hardware, data volume, plugins, build [BUILD]).

Deliverable: 3 bullets + flag “safe_to_compare: yes/no” with reason.

Guardrails: if “no,” propose a re-run condition.

Data integrity / sanity

9) Duration & warm-up sanity

Use to avoid reading noise as a signal.

Context: [ENV]; run=[RUN_A].

Task: verify test duration meets plan and warm-up is excluded from analysis.

Deliverable: minute marks for warm-up end and steady-state start + 3 bullets (duration, stability, any anomalies) + 1 action.

Guardrails: cite the exact chart section analyzed.
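A simple throughput-based heuristic gives you your own warm-up cutoff to compare with the AI’s. A sketch, assuming per-minute RPS and a fixed target; “steady” here means staying within ±5% of target for three consecutive minutes, a hypothetical rule, and latency or error stability may deserve their own checks.

```python
from typing import Optional


def steady_state_start(rps_per_minute: list[float], target_rps: float,
                       tol_pct: float = 5.0, hold_minutes: int = 3) -> Optional[int]:
    """First minute from which throughput stays within ±tol_pct of target for hold_minutes."""
    low = target_rps * (1 - tol_pct / 100)
    high = target_rps * (1 + tol_pct / 100)
    for start in range(len(rps_per_minute) - hold_minutes + 1):
        window = rps_per_minute[start:start + hold_minutes]
        if all(low <= value <= high for value in window):
            return start
    return None  # never settled: treat the run as unfit for comparison


start = steady_state_start([200, 600, 1100, 1480, 1510, 1495, 1505], target_rps=1500)
print(f"warm-up ends / steady state starts at T+{start}")
```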

10) Missing or rare transactions

Use to catch coverage holes that nullify conclusions.

Context: [ENV]; run=[RUN_A]; expected_flows=[list].

Task: detect missing/rare transactions versus expectation.

Deliverable: table expected_tx | observed_count | expected_count | gap% | note; + 2 bullets + 1 action (rerun or accept).

Guardrails: mention data feeders/correlation that may suppress flows.
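Coverage gaps are easy to compute once you have counts per transaction. A sketch assuming dicts of observed and expected counts; the 10% threshold for calling a transaction “rare” is a hypothetical choice.

```python
def coverage_gaps(observed: dict[str, int], expected: dict[str, int],
                  rare_gap_pct: float = 10.0):
    """Rows of (expected_tx, observed_count, expected_count, gap%, note)."""
    rows = []
    for tx, expected_count in expected.items():
        observed_count = observed.get(tx, 0)  # absent from results = zero observations
        gap = (expected_count - observed_count) * 100 / expected_count
        if observed_count == 0:
            note = "missing"
        elif gap > rare_gap_pct:
            note = "rare"
        else:
            note = "ok"
        rows.append((tx, observed_count, expected_count, round(gap, 1), note))
    return rows


expected = {"login": 1000, "search": 3000, "checkout": 500}
observed = {"login": 990, "search": 2100}
rows = coverage_gaps(observed, expected)
for row in rows:
    print(row)
```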

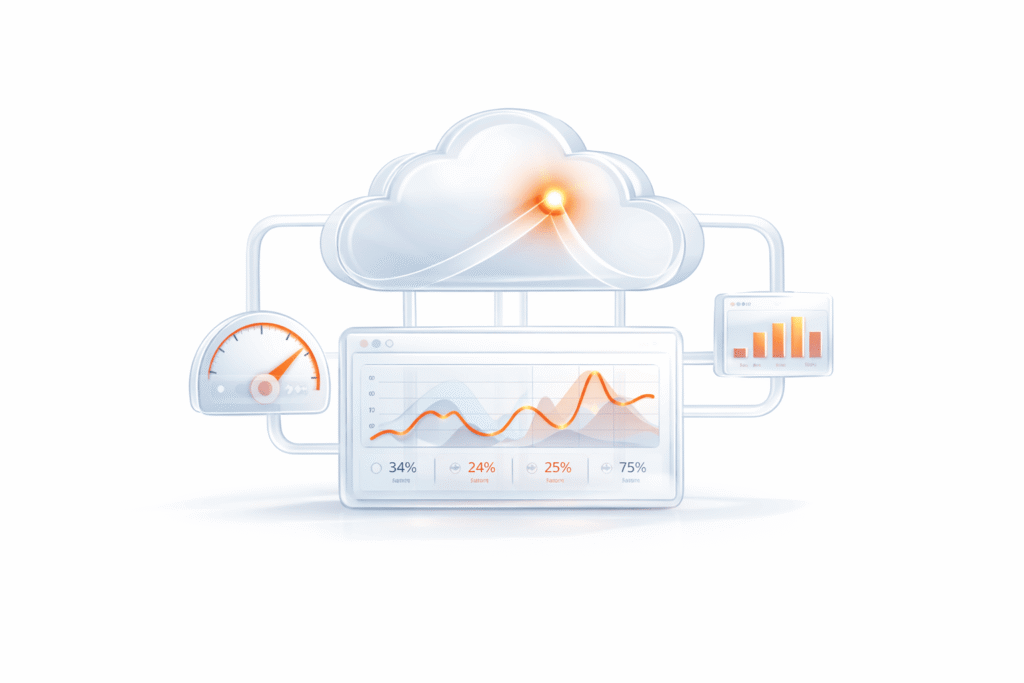

Prompts for SRE (correlate with infrastructure & capacity)

SRE prompts aim to join application symptoms with platform signals. Ask for precise timestamps so you can pivot directly to your APM/metrics.

Hot endpoints & error sources

11) Rank by tail pain

Context: [ENV]; run=[RUN_A]; window=[WINDOW].

Task: rank endpoints by contribution to overall P95/P99.

Deliverable: table endpoint | P95(ms) | calls | contribution_to_tail% | error_rate%; + 1 action.

Guardrails: exclude warm-up; flag endpoints with <1% traffic.

12) Map errors to upstream services

Context: [ENV]; run=[RUN_A]; window=[WINDOW].

Task: group errors by [SERVICE] and summarize patterns.

Deliverable: table service | status_codes | error_rate% | peak_minute | suspected_cause; + 1 action.

Guardrails: separate 4xx vs 5xx; note timeouts explicitly.

Saturation signals

13) CPU/GC/IO correlation

Context: [ENV]; run=[RUN_A]; window=[WINDOW]; infrastructure=[CPU,MEM,GC,IO] if available.

Task: correlate latency spikes with infrastructure metrics.

Deliverable: per-spike bullets (timestamp, metric excursion, likely bottleneck) + 1 action.

Guardrails: show chart minute marks for both app and infrastructure.
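To check a claimed correlation before opening your APM, you can line up per-minute series for the same window. A minimal sketch, assuming equal-length per-minute lists (P95 in ms, CPU in %); the spike rule (1.5× the window median) is a hypothetical heuristic, and a high correlation over a short window only suggests a link; it does not prove the bottleneck.

```python
import math
import statistics


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def spike_minutes(series: list[float], factor: float = 1.5) -> list[int]:
    """Minute offsets where the value exceeds factor x the window median."""
    median = statistics.median(series)
    return [minute for minute, value in enumerate(series) if value > factor * median]


# Illustrative per-minute data for one window (minute 0 = window start).
p95_ms = [410, 420, 415, 930, 880, 425, 418]
cpu_pct = [55, 57, 56, 91, 88, 58, 56]

for minute in spike_minutes(p95_ms):
    print(f"spike at window minute {minute}: P95={p95_ms[minute]} ms, CPU={cpu_pct[minute]}%")
print(f"r(P95, CPU) = {pearson(p95_ms, cpu_pct):.2f}")
```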

14) Queue/backpressure hints

Context: [ENV]; run=[RUN_A]; window=[WINDOW].

Task: detect backpressure (queue growth, timeouts, 429/503 bursts).

Deliverable: 3 bullets (where, when, impact) + 1 action.

Guardrails: call out client retry behavior if visible.

Failure timing & change events

15) Align with changes

Context: [ENV]; run=[RUN_A]; window=[WINDOW]; changes=[deploy [BUILD], config flips].

Task: align failures/spikes with change events.

Deliverable: table time | event | metric_change | note; + 1 action.

Guardrails: mark confidence=high/med/low.

16) Timeouts & resilience

Context: [ENV]; run=[RUN_A]; window=[WINDOW].

Task: detect timeouts and presence of jitter/backoff/circuit-breaking.

Deliverable: table endpoint | timeout_rate% | avg_attempts | backoff? yes/no; + 1 action.

Guardrails: cite minute marks for representative samples.
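Whether retries back off is visible in the gaps between attempts. A sketch assuming you have the timestamps (seconds) of successive attempts for one request; it calls the pattern backoff when every gap is at least `min_growth` times the previous one. That is a hypothetical rule: jitter randomizes gaps, so loosen it if your client adds jitter.

```python
from typing import Optional


def looks_like_backoff(attempt_times: list[float], min_growth: float = 1.5) -> Optional[bool]:
    """True if gaps between attempts keep growing; None if too few attempts to judge."""
    gaps = [later - earlier for earlier, later in zip(attempt_times, attempt_times[1:])]
    if len(gaps) < 2:
        return None
    return all(nxt >= min_growth * prev for prev, nxt in zip(gaps, gaps[1:]))


print(looks_like_backoff([0.0, 1.0, 3.0, 7.0]))  # True: gaps 1, 2, 4
print(looks_like_backoff([0.0, 0.5, 1.0, 1.5]))  # False: fixed 0.5 s gaps
```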

Capacity & headroom

17) SLA edge

Context: [ENV]; run=[RUN_A]; window=[WINDOW]; SLA=[P95<=Xms, error_rate<=Y%].

Task: estimate sustainable RPS where SLA still holds; provide headroom%.

Deliverable: method summary + headroom estimate + 1 action.

Guardrails: state assumptions (traffic mix, cache state).
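The headroom estimate is easiest to audit when the run stepped load up in stages. A sketch assuming a step-load run summarized as `(rps, p95_ms, error_rate_pct)` per steady step; it takes the highest step before the first SLA breach as the sustainable rate, a conservative reading that only holds for that run’s traffic mix and cache state. The SLA and target reuse Example B’s values; the step data is illustrative.

```python
from typing import Optional


def sla_headroom(steps, target_rps: float, p95_max_ms: float, error_max_pct: float):
    """steps: (rps, p95_ms, error_rate_pct) per load step. Returns (sustainable_rps, headroom_pct)."""
    sustainable: Optional[float] = None
    for rps, p95_ms, error_pct in sorted(steps):
        if p95_ms > p95_max_ms or error_pct > error_max_pct:
            break  # first breach: stop, even if a higher step happens to pass
        sustainable = rps
    if sustainable is None:
        return None, None
    return sustainable, round((sustainable - target_rps) * 100 / target_rps, 1)


steps = [(600, 310, 0.1), (900, 420, 0.2), (1200, 650, 0.6), (1500, 980, 1.8)]
print(sla_headroom(steps, target_rps=900, p95_max_ms=800, error_max_pct=1.0))  # (1200, 33.3)
```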

18) Scale hint

Context: [ENV]; run=[RUN_A].

Task: suggest whether vertical or horizontal scaling likely helps, and why.

Deliverable: 3 bullets mapping symptom→resource (CPU-bound, IO-bound, lock contention) + 1 action.

Guardrails: avoid prescriptive fixes without evidence timestamps.

Prompts for Execs (four-bullet summaries)

Keep it crisp. The goal is status, risk, cause sketch, next step — grounded in time-stamped evidence.

19) One-screen summary

Context: [ENV]; run=[RUN_A]; window=[WINDOW]; SLA=[SLA].

Task: 4 bullets: result vs SLA, top risk + timestamp, likely cause sketch, next step + owner.

Guardrails: ≤70 words; include confidence.
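Guardrails like the word limit can be enforced mechanically before a summary goes to leadership. A sketch with hypothetical checks: four non-empty lines, at most 70 words, at least one minute mark in the `T+N` style used in this article, and a `Confidence:` label. The regexes assume that exact formatting; the sample is adapted from Example B.

```python
import re

MINUTE_MARK = re.compile(r"T\+\d+")  # matches marks such as T+19
CONFIDENCE = re.compile(r"confidence:\s*(high|medium|low)", re.IGNORECASE)


def check_summary(text: str, max_words: int = 70, bullets: int = 4) -> list[str]:
    """Return guardrail violations; an empty list means the summary passes."""
    problems = []
    lines = [line for line in text.splitlines() if line.strip()]
    if len(lines) != bullets:
        problems.append(f"expected {bullets} bullets, got {len(lines)}")
    word_count = len(text.split())
    if word_count > max_words:
        problems.append(f"{word_count} words (limit {max_words})")
    if not MINUTE_MARK.search(text):
        problems.append("no minute mark such as T+19")
    if not CONFIDENCE.search(text):
        problems.append("no confidence rating")
    return problems


summary = """Outcome: P95 breached, peak 920 ms at T+19; error_rate 1.3%; No-Go.
Top risk: POST /checkout tail at T+18 to T+22 under steady state.
Likely cause: payments service or DB wait near T+19.
Next step: Payments team reruns a focused test. Confidence: Medium."""
print(check_summary(summary) or "passes")
```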

20) Business impact

Context: [ENV]; run=[RUN_A]; journeys=[login/search/checkout or API].

Task: 4 bullets linking findings to journeys; add rough impact if known.

Guardrails: cite minute marks; avoid speculative numbers.

21) Go/No-Go

Context: [ENV]; run=[RUN_A]; SLA=[SLA].

Task: declare Go/No-Go/Conditional; list ≤3 must-fix items with timestamps; 1 closing risk sentence.

Guardrails: include confidence and next check time.

22) Trend vs last release

Context: [ENV]; run=[RUN_A]; baseline=[BASELINE]; window=[WINDOW].

Task: 4 bullets on P95, error_rate, throughput, confidence; + 1 action.

Guardrails: confirm steady state in both runs.

23) Risk heatmap (verbal)

Context: [ENV]; run=[RUN_A].

Task: describe a simple risk heatmap by endpoint (high/med/low) with one-liners.

Guardrails: ≤80 words; cite at least one timestamp for “high.”

24) Cost to remediate (rough)

Context: [ENV]; run=[RUN_A].

Task: 3 bullets estimating effort tiers (quick <1d, medium 2–4d, larger 1–2w) tied to top issues.

Guardrails: state assumptions and owner role.

Prompts for PMs (risk, SLAs, prioritization)

Translate technical findings into plans, criteria, and scope moves.

25) SLA deltas

Context: [ENV]; run=[RUN_A]; window=[WINDOW]; SLA=[SLA].

Task: list where we miss SLA and by how much.

Deliverable: table endpoint | actual | SLA | delta | user_impact; + 1 action.

Guardrails: include minute marks for breaches.

26) Prioritization

Context: [ENV]; run=[RUN_A].

Task: turn top 3 findings into backlog tickets (title, AC, owner, due_by).

Deliverable: checklist format.

Guardrails: each AC must be measurable.

27) Scope trade-offs

Context: [ENV]; run=[RUN_A]; goal=[meet SLA by launch].

Task: propose 3 descopes to hit SLA (e.g., feature flag X at traffic Y); include pros/cons.

Guardrails: note user impact and rollback plan.

28) Release notes

Context: [ENV]; run=[RUN_A]; baseline=[BASELINE].

Task: draft a concise performance section: 3 bullets on improvements/regressions with numbers + chart links.

Guardrails: avoid vague terms; include confidence.

29) User journey mapping

Context: [ENV]; run=[RUN_A]; journeys=[auth → browse/search → add-to-cart → checkout].

Task: 4 bullets, each with P95 & error_rate snapshot + timestamp.

Guardrails: confirm traffic volume per step.

30) Acceptance criteria refresh

Context: [ENV]; run=[RUN_A]; current_SLA=[SLA].

Task: propose updated acceptance criteria.

Deliverable: table metric | current | proposed | rationale; + 1 action.

Guardrails: keep thresholds testable in CI.
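One way to keep thresholds testable in CI is a small gate that fails the build when a measured value crosses its limit. A sketch, assuming your pipeline can hand it a dict of steady-state results; the threshold values mirror Example B’s SLA, and the function names are hypothetical.

```python
# Thresholds mirror Example B's SLA (P95 <= 800 ms, error_rate <= 1%); replace with your own.
THRESHOLDS = {"p95_ms": 800.0, "error_rate_pct": 1.0}


def ci_gate(measured: dict[str, float], thresholds: dict[str, float] = THRESHOLDS) -> list[str]:
    """Compare steady-state results with upper limits; return failure messages."""
    failures = []
    for metric, limit in thresholds.items():
        value = measured.get(metric)
        if value is None:
            failures.append(f"{metric}: missing from results")  # a silent gap should fail too
        elif value > limit:
            failures.append(f"{metric}: {value} > {limit}")
    return failures


def exit_code(measured: dict[str, float]) -> int:
    """0 = pass, 1 = fail; a CI step could pass this value to sys.exit()."""
    failures = ci_gate(measured)
    for failure in failures:
        print("FAIL", failure)
    return 1 if failures else 0


print(exit_code({"p95_ms": 920.0, "error_rate_pct": 1.3}))  # Example B's illustrative numbers
```

Treating a missing metric as a failure keeps the gate honest: a broken export should not read as a passing run.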